Introduction

Welcome to the Gofer documentation! This documentation is a reference for all available features and options of Gofer.

- To kick the tires visit: Getting Started

- To read more about Gofer's feature set visit: Features

- To understand the why of Gofer visit: Philosophy

Gofer: Run short-lived jobs easily.

Gofer is an opinionated, streamlined automation engine designed for the cloud-native era. It specializes in executing your custom scripts in a containerized environment, making it versatile for both developers and operations teams. Deploy Gofer effortlessly as a single static binary, and manage it using expressive, declarative configurations written in real programming languages. Once set up, Gofer takes care of scheduling and running your automation tasks—be it on Nomad, Kubernetes, or even Local Docker.

Its primary function is to execute short-term jobs like code linting, build automation, testing, port scanning, ETL operations, or any task you can containerize and trigger based on events.

Features:

- Simple Deployment: Install Gofer effortlessly with a single static binary and manage it through its intuitive command-line interface.

- Language Flexibility: Craft your pipelines in programming languages you're already comfortable with, such as Go or Rust—no more wrestling with unfamiliar YAML.

- Local Testing: Validate and run your pipelines locally, eliminating the guesswork of "commit and see" testing.

- Extensible Architecture: Easily extend Gofer's capabilities by writing your own plugins, backends, and more, in any language via gRPC.

- Built-In Storage: Comes with an integrated Object and Secret store for your convenience.

- DAG Support: Harness the power of Directed Acyclic Graphs (DAGs) for complex workflow automation.

- Robust Reliability: Automatic versioning, Blue/Green deployments, and canary releases ensure the stability and dependability of your pipelines.

Demo:

Documentation & Getting Started

If you want to fully dive into Gofer, check out the documentation site!

Install

Extended installation information is available through the documentation site.

Download a specific release:

You can view and download releases by version here.

Download the latest release:

- Linux:

wget https://github.com/clintjedwards/gofer/releases/latest/download/gofer

Build from source:

You'll need to install protoc and its associated golang/grpc modules first

git clone https://github.com/clintjedwards/gofer && cd gofer

make build OUTPUT=/tmp/gofer

The Gofer binary comes with a CLI to manage the server as well as act as a client.

Dev Setup

Gofer is set up so that its base run mode is development mode. Simply running the binary without any additional flags allows easy, authless development.

You'll need to install the following first:

To run Gofer dev mode:

To build protocol buffers:

Run from the Makefile

Gofer uses flags, env vars, and files to manage configuration (listed in order of precedence, highest first). The Makefile already includes all the commands and flags you need for dev mode; simply run make run.
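As a rough illustration of what that ordering means in practice, here is a minimal sketch of precedence-based resolution. It assumes "most important" means "wins when several sources set the same option"; the resolve function and its parameters are hypothetical and are not taken from Gofer's source.

```go
package main

import "fmt"

// resolve returns the first non-empty value, checking the flag value first,
// then the environment value, then the file value, and finally a default.
// This is an assumed model of precedence, not Gofer's actual implementation.
func resolve(flagVal, envVal, fileVal, def string) string {
	for _, v := range []string{flagVal, envVal, fileVal} {
		if v != "" {
			return v
		}
	}
	return def
}

func main() {
	// No flag was passed, so the environment value wins over the file value.
	fmt.Println(resolve("", "debug", "info", "warn"))
}
```

Under this model, exporting GOFER_LOG_LEVEL=debug would override a value from a configuration file but lose to an explicit flag.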

In case you want to run without the Makefile, simply run:

export GOFER_LOG_LEVEL=debug

go build -o /tmp/gofer

/tmp/gofer service start --dev-mode

Editing Protobufs

Gofer uses gRPC and protobufs both to communicate with plugins and to provide an external API. These protobuf files are located in /proto. To compile new protobufs once the original .proto files have changed, use the make build-protos command.

Editing Documentation

Documentation is done with mdbook.

To install:

cargo install mdbook

cargo install mdbook-linkcheck

Once you have mdbook you can simply run make run-docs to give you an auto-reloading dev version of the documentation in a browser.

Regenerating Demo Gif

The GIF on the README page is generated with vhs, a very handy tool that lets you write a configuration file which pops out a GIF on the other side.

To do this, vhs has to actually run the commands, so we must start the server before we regenerate the GIF.

rm -rf /tmp/gofer* # Start with a fresh database

make run # Start the server in dev mode

cd documentation/src/assets

vhs < demo.tape # this will start running commands against the server and output the gif as demo.gif.

Authors

- Clint Edwards - Github

This software is provided as-is. It's a hobby project, done in my free time, and I don't get paid for doing it.

How does Gofer work?

Gofer works in a very simple client-server model. You deploy Gofer as a single binary to your favorite VPS and you can configure it to connect to all the tooling you currently use to run containers.

Gofer acts as a scheduling middle man between a user's intent to run a container at the behest of an event and your already established container orchestration system.

Workflow

Interaction with Gofer is mostly done through its command line interface which is included in the same binary as the master service.

General Workflow

- Gofer is connected to a container orchestrator of some sort. This can be just your local docker service or something like K8s or Nomad.

- It launches its configured extensions (extensions are just docker containers) and these extensions wait for events to happen.

- Users create pipelines (by configuration file) that define exactly in which order and what containers they would like to run.

- Pipelines don't have to use extensions, but they usually do so that they can run automatically.

- Either by extension or manual intervention a pipeline run will start and schedule the containers defined in the configuration file.

- Gofer will collect the logs, exit code, and other essentials from each container run and provide them back to the user along with summaries of how that particular run performed.

Extension Implementation

- When Gofer launches the first thing it does is create the extension containers the same way it schedules any other container.

- The extension containers are all small GRPC services that are implemented using a specific interface provided by the SDK.

- Gofer passes the extension a secret value that only it knows so that the extension doesn't respond to any requests that might come from other sources.

- After the extension is initialized Gofer will subscribe any pipelines that have requested this extension (through their pipeline configuration file) to that extension.

- The extension then takes note of this subscription and waits for the relevant event to happen.

- When the event happens it figures out which pipeline should be alerted and sends an event to the main Gofer process.

- The main gofer process then starts a pipeline run on behalf of the extension.
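The steps above can be sketched in a few lines of Go. This is only an in-memory model of the bookkeeping an extension might do (checking the shared secret, recording subscriptions, and looking up which pipelines to alert). The extension type and its methods are hypothetical; the real extensions are gRPC services built on the SDK's interface.

```go
package main

import (
	"crypto/subtle"
	"fmt"
)

// extension is a hypothetical in-memory model of an extension's state.
type extension struct {
	secret        string              // shared secret handed over by Gofer at startup
	subscriptions map[string][]string // event name -> subscribed pipeline IDs
}

// authorized reports whether a request carries the expected secret.
// The comparison is constant-time to avoid leaking the secret through timing.
func (e *extension) authorized(token string) bool {
	return subtle.ConstantTimeCompare([]byte(e.secret), []byte(token)) == 1
}

// subscribe records that a pipeline wants to be told about an event.
func (e *extension) subscribe(event, pipelineID string) {
	e.subscriptions[event] = append(e.subscriptions[event], pipelineID)
}

// pipelinesFor returns the pipelines that should be alerted for an event.
func (e *extension) pipelinesFor(event string) []string {
	return e.subscriptions[event]
}

func main() {
	ext := &extension{secret: "s3cr3t", subscriptions: map[string][]string{}}
	ext.subscribe("every_5m", "simple")
	fmt.Println(ext.authorized("wrong"), ext.pipelinesFor("every_5m"))
}
```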

Glossary

- Pipeline: A pipeline is a collection of tasks that can be run at once. Pipelines can be defined via a pipeline configuration file. Once you have a pipeline config file you can create a new pipeline via the CLI (recommended) or API.

- Run: A run is a single execution of a pipeline. A run can be started automatically via extensions or manually via the API or CLI.

- Extension: An extension allows for the extension of pipeline functionality. Extensions start up with Gofer as long-running docker containers, and pipelines can subscribe to them to gain additional functionality.

- Task: A task is the lowest unit in Gofer. It is a small abstraction over running a single container. Through tasks you can define what container you want to run, when to run it in relation to other containers, and what variables/secrets those containers should use.

- Task Run: A task run is an execution of a single task container. Referencing a specific task run is how you can examine the results, logs, and details of one of your tasks.

FAQ

> I have a job that works with a remote git repository, other CI/CD tools make this trivial, how do I mimic that?

The drawback of Gofer's model and architecture is that it does not specifically cater to GitOps. Because of Gofer's inherently distributed nature, certain workflows that come out of the box with other CI/CD tooling will need to be recreated.

Gofer has provided several tooling options to help with this.

There are two problems that need to be solved around the managing of git repositories for a pipeline:

1) How do I authenticate to my source control repository?

Good security practice suggests that you should manage repository deploy keys per repository, per team. You can potentially forgo the "per team" suggestion if you use a read-only key and the scope of things using the key isn't too big.

Gofer's suggestion here is to make deploy keys self service and then simply enter them into Gofer's secret store to be used by your pipeline's tasks. Once there you can then use it in each job to pull the required repository.

2) How do I download the repository?

Three strategies:

- Just download it when you need it. Depending on the size of your repository and the frequency of the pull, this can work absolutely fine.

- Use the object store as a cache. Gofer provides an object store to act as a permanent (pipeline-level) or short-lived (run-level) cache for your workloads. Simply store the repository inside the object store and pull down per job as needed.

- Download it as you need it using a local caching git server. Once your repository starts becoming large or you do many pulls quickly it might make more sense to use a cache [1][2]. It also makes sense to only download what you need using git tools like sparse checkout.

1: https://github.com/google/goblet

2: https://github.com/jonasmalacofilho/git-cache-http-server
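To make the "object store as a cache" strategy a little more concrete, here is a minimal cache-or-download sketch. The objectStore type is a plain map standing in for a real store client, and fetchRepo and its key format are hypothetical; Gofer's actual object store API is not shown here.

```go
package main

import "fmt"

// objectStore is a hypothetical stand-in for an object store client.
type objectStore map[string][]byte

// fetchRepo returns the cached archive for key if present; otherwise it calls
// download, stores the result under key, and returns it.
func fetchRepo(store objectStore, key string, download func() ([]byte, error)) ([]byte, error) {
	if data, ok := store[key]; ok {
		return data, nil
	}
	data, err := download()
	if err != nil {
		return nil, err
	}
	store[key] = data
	return data, nil
}

func main() {
	store := objectStore{}
	calls := 0
	download := func() ([]byte, error) {
		calls++
		return []byte("repo archive"), nil
	}
	// The second fetch is served from the store, so download runs only once.
	fetchRepo(store, "repo@main", download)
	fetchRepo(store, "repo@main", download)
	fmt.Println("downloads performed:", calls)
}
```

Keying the cache on something like the repository plus a ref keeps stale archives from being reused after the ref moves, at the cost of one extra download per new ref.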

Feature Guide

Write your pipelines in a real programming language.

Other infrastructure tooling tried configuration languages (YAML, HCL)... and they kinda suck [1]. The Gofer CLI allows you to create your pipelines in a fully featured programming language. Pipelines can currently be written in Go or Rust [2].

DAG(Directed Acyclic Graph) Support.

Gofer provides the ability to run your containers in reference to your other containers.

With DAG support you can run containers:

- In parallel.

- After other containers.

- When particular containers fail.

- When particular containers succeed.
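One way to picture these rules is as a check that runs before a task is started. The sketch below is an assumption about how such a check could look, not Gofer's scheduler code; the state type, its constants, and canRun are all hypothetical names.

```go
package main

import "fmt"

// state is the outcome a dependency must reach before a task may start.
type state string

const (
	anyState state = "any"     // run after the dependency finishes, whatever the outcome
	success  state = "success" // run only if the dependency succeeded
	failure  state = "failure" // run only if the dependency failed
)

// canRun reports whether every dependency has finished in its required state.
// finished maps a task ID to true (succeeded) or false (failed); tasks that
// have not finished yet are absent from the map.
func canRun(dependsOn map[string]state, finished map[string]bool) bool {
	for dep, required := range dependsOn {
		ok, done := finished[dep]
		if !done {
			return false
		}
		if (required == success && !ok) || (required == failure && ok) {
			return false
		}
	}
	return true
}

func main() {
	finished := map[string]bool{"build": true, "test": false}
	fmt.Println(canRun(map[string]state{"build": success}, finished)) // build succeeded
	fmt.Println(canRun(map[string]state{"test": failure}, finished))  // test failed, as required
	fmt.Println(canRun(map[string]state{"test": success}, finished))  // test did not succeed
}
```

Tasks with no dependencies pass the check immediately, which is what lets them start in parallel.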

GRPC API

Gofer uses GRPC and Protobuf to construct its API surface. This means that Gofer's API is easy to use, well defined, and can easily be developed for in any language.

The use of Protobuf gives us a few main advantages:

- The most up-to-date API contract can always be found by reading the .proto files included in the source.

- Developers working in Go or Rust can develop against the API by simply importing the autogenerated proto package.

- Developers working in other languages can import the proto files and generate code for the language they need.

You can find more information on protobuf, proto files, and how to autogenerate the code you need to use them to develop against Gofer in the protobuf documentation.

Namespaces

Gofer allows you to separate out your pipelines into different namespaces, allowing you to organize your teams and set permissions based on those namespaces.

Extensions

Extensions are the way users can add extra functionality to their pipelines, for instance the ability to automate their pipelines by waiting on bespoke events (like the passage of time).

Extensions are nothing more than docker containers themselves that talk to the main process when they require activity.

Gofer out of the box provides some default extensions like cron and interval. But even more powerful than that, it accepts any type of extension you can think up and code using the included SDK.

Extensions are brought up alongside Gofer as long-running docker containers that it launches and manages.

Object Store

Gofer provides a built-in object store you can access with the Gofer CLI. This object store provides a caching and data transfer mechanism so you can pass values from one container to the next, and also store objects that you might need for all containers.

Secret Store

Gofer provides a built-in secret store you can access with the Gofer CLI. This secret store provides a way to pass secret values needed by your pipeline configuration into Gofer.

Events

Gofer provides a list of events for the most common actions performed. You can view this event stream via the Gofer API, allowing you to build on top of Gofer's actions and even use Gofer as a trigger for other events.

External Events

Gofer allows extensions to consume external events. This allows for extensions to respond to webhooks from favorite sites like Github and more.

Pluggable Everything

Gofer plugs into the backends your team is already using. This means that you never have to maintain things outside of your wheelhouse.

Whether you want to schedule your containers on K8s or AWS Lambda, or maybe you'd like to use an object store that you're more familiar with in minio or AWS S3, Gofer provides either an already created plugin or an interface to write your own.

1: Initially, the reasons for using configuration languages made sense: they lowered the bar for users who might not know how to program and made things simpler overall to maintain (read: not shoot yourself in the foot with crazy inheritance structures). But, in practice, we've found that they kinda suck. Nobody wants to learn yet another language for this one specific thing. Furthermore, using a separate configuration language doesn't allow you to plug into the years of practice, tooling, and testing that teams have with a certain favorite language.

2: All pipelines eventually reduce to protobuf, so technically, given the correct libraries, your pipelines can be written in any language you like!

Via GRPC.

Best Practices

In order to schedule workloads on Gofer your code will need to be wrapped in a docker container. This is a short workflow blurb about how to create containers to best work with Gofer.

1) Write your code to be idempotent.

Write your code in whatever language you want, but it's a good idea to make it idempotent. Gofer does not guarantee single container runs (but even if it did that doesn't prevent mistakes from users).
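As a small, hedged illustration of what "idempotent" can mean for a workload, the sketch below skips work that has already been recorded as done. The ledger type and runOnce function are hypothetical, and the in-memory map stands in for durable storage (a database row, an object store key, and so on) that a real workload would need.

```go
package main

import "fmt"

// ledger is a hypothetical record of work already completed, keyed by an
// idempotency key. A real workload would keep this in durable storage.
type ledger map[string]bool

// runOnce performs work only if key has not been completed before, so a
// duplicate container run becomes a harmless no-op. It reports whether the
// work was actually executed.
func runOnce(done ledger, key string, work func() error) (bool, error) {
	if done[key] {
		return false, nil
	}
	if err := work(); err != nil {
		return false, err // not marked done, so a later retry can try again
	}
	done[key] = true
	return true, nil
}

func main() {
	done := ledger{}
	charge := func() error {
		fmt.Println("charging invoice 42")
		return nil
	}
	runOnce(done, "invoice-42", charge)
	runOnce(done, "invoice-42", charge) // the second run does nothing
}
```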

2) Follow 12-factor best practices.

Configuration is the important one. Gofer passes information into containers via environment variables, so your code will need to take any input or configuration it needs from environment variables.
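A workload that follows this advice might load its settings roughly like the sketch below. The variable names TARGET_URL and RETRIES, their defaults, and the config struct are made up for illustration; they are not variables that Gofer itself defines.

```go
package main

import (
	"fmt"
	"os"
	"strconv"
)

// config holds the settings this hypothetical workload needs.
type config struct {
	TargetURL string
	Retries   int
}

// loadConfig reads settings through lookup (os.LookupEnv in production),
// falling back to defaults when a variable is unset.
func loadConfig(lookup func(string) (string, bool)) (config, error) {
	cfg := config{TargetURL: "http://localhost:8080", Retries: 3}
	if v, ok := lookup("TARGET_URL"); ok {
		cfg.TargetURL = v
	}
	if v, ok := lookup("RETRIES"); ok {
		n, err := strconv.Atoi(v)
		if err != nil {
			return config{}, fmt.Errorf("RETRIES must be an integer: %w", err)
		}
		cfg.Retries = n
	}
	return cfg, nil
}

func main() {
	cfg, err := loadConfig(os.LookupEnv)
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	fmt.Printf("%+v\n", cfg)
}
```

Taking the lookup function as a parameter keeps the loader easy to test locally without touching the real process environment.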

3) Keep things simple.

You could, in theory, create a super complicated graph of containers that run off each other. But the main theme of Gofer is simplicity. Make sure you're thinking through the benefits of managing something in separate containers vs just running a monolith container. There are good reasons for both; always err on the side of clarity and ease of understanding.

4) Keep your containers lean.

Because of the potentially distributed nature of Gofer, the larger the containers you run, the greater the potential lag before your container starts executing. This is because there is no guarantee that your container will end up on a machine that already has the image. Downloading large images takes a lot of time and a lot of disk space.

Troubleshooting Gofer

This page provides various tips on how to troubleshoot and find issues/errors within Gofer.

Debugging extensions

Extensions are simply long-running docker containers that internally wait for an event to happen and then communicate with Gofer through its API.

Debugging information coming soon.

Debugging Tasks

When tasks aren't working quite right, it helps to have some simple tasks that you can use to debug. Gofer provides a few of these to aid in debugging.

| Name | Image | Description |
|---|---|---|
| envs | ghcr.io/clintjedwards/gofer/debug/envs | Simply prints out all environment variables found. |
| fail | ghcr.io/clintjedwards/gofer/debug/fail | Purposely exits with a non-zero exit code. Useful for testing that pipeline failures or alerting work correctly. |
| log | ghcr.io/clintjedwards/gofer/debug/log | Prints a couple of paragraphs of log lines with 1 second in between. Useful as a container that takes a while to finish and for testing that log following works correctly. |
| wait | ghcr.io/clintjedwards/gofer/debug/wait | Waits a specified amount of time and then exits successfully. |

Gofer's Philosophy

Things should be simple, easy, and fast. For if they are not, people will look for an alternate solution.

Gofer focuses on the usage of common docker containers to run workloads that don't belong as long-running applications. The ability to run containers easily is a powerful tool for users who need to run various short-term workloads and don't want to care about the idiosyncrasies of the tooling that they run on top of.

How do I use Gofer? What's a common workflow?

- Create a docker container with the workload/code you want to run.

- Create a configuration file (kept with your workload code) in which you tell Gofer what containers to run and when they should be run.

- Gofer takes care of the rest!

What problem is Gofer attempting to solve?

The current landscape for running short-term jobs is heavily splintered and could do with some centralization and sanity.

1) Tooling in this space is often CI/CD focused and treats gitops as a core tenet.

Initially this is really good; Gitops is something most companies should embrace. But eventually, as your workload grows, you'll notice that you want or need a little more control over your short-term workloads without setting up complicated release scheduling.

2) Tooling in this space can lack testability.

Ever set up a CI/CD pipeline for your team and end up with a string of commits simply testing or fixing bugs in your assumptions of the system? This is usually due to not understanding how the system works, what values it will produce, or testing being difficult.

These are issues because most CI/CD systems make it hard to test locally. In order to support a wide array of job types(and lean toward being fully gitops focused) most of them run custom agents which in turn run the jobs you want.

This can be bad, since it's usually non-trivial to understand exactly what these agents will do once they handle your workload. Dealing with these agents can also be an operational burden. Operators are generally unfamiliar with these custom agents and it doesn't play to the strengths of an ops team that is already focused on other complex systems.

Gofer leverages schedulers which work locally and are already native to your environment, so testing locally is never far away!

3) Tooling in this space can lack simplicity.

Some user experience issues I've run into using other common CI/CD tooling:

- 100 line bash script (filled with sed and awk) to configure the agent's environment before my workload was loaded onto it.

- Debugging docker in docker issues.

- Reading the metric shit ton of documentation just to get a project started, only to realize everything is proprietary.

- Trying to understand a groovy script nested so deep it got into one of the layers of hell.

- Dealing with the security issues of a way too permissive plugin system.

- Agents giving vague and indecipherable errors as to why my job failed.

Gofer aims to use tooling that users are already familiar with and then get out of the way. Running containers should be easy. Forgoing things like custom agents and being opinionated about how workloads should be run allows users to understand the system immediately and be productive quickly.

Familiar with the logging, metrics, and container orchestration of a system you already use? Great! Gofer will fit right in.

Why should you not use Gofer?

1) You need to simply run tests for your code.

While Gofer can do this, the gitops process really shines here. I'd recommend using any one of the widely available gitops-focused tools. Attempting to do this with Gofer will require you to recreate some of the things these tools give you for free, namely git repository management and automatic deployments.

2) The code you run is not idempotent.

Gofer does not guarantee a single run of a container. Even though it does a good job in best effort, a perfect storm of operator error, extension errors, or sudden shutdowns could cause multiple runs of the same container.

3) The code you run does not follow cloud native best practices.

The easiest primer on cloud native best practices is the 12-factor guide, specifically the configuration section. Gofer provides tooling for containers to operate following these guidelines, the most important being that your code will need to take configuration via environment variables.

4) The scheduling you need is precise.

Gofer makes a best effort to start jobs on their defined timeline, but it is at the mercy of many parts of the system (scheduling lag, image download time, competition with other pipelines). If you need runs that are precise down to the second or minute, Gofer does not guarantee that.

Gofer works better when jobs can tolerate starting 1 to 5 minutes after their scheduled event/time.

Why not use <insert favorite tool> instead?

| Tool | Category | Why not? |
|---|---|---|
| Jenkins | General thing-doer | Supports generally anything you might want to do ever, but because of this it can be operationally hard to manage, usually has massive security issues, and isn't by default opinionated enough to provide users a good interface into how they should be managing their workloads. |
| Buildkite/CircleCI/Github actions/etc | Gitops cloud builders | Gitops focused cloud build tooling is great for most situations and probably what most companies should start out using. The issue is that running your workloads can be hard to test since these tools use custom agents to manage those jobs. This causes local testing to be difficult as the custom agents generally work very differently locally. Many times users will fight with yaml and make commits just to test that their job does what they need, due to there being no way to determine that beforehand. |
| ArgoCD | Kubernetes focused CI/CD tooling | In the right direction with its focus on running containers on already established container orchestrators, but Argo is tied to gitops, making it hard to test locally, and is also closely tied to Kubernetes. |
| ConcourseCI | Container focused thing do-er | Concourse is great and where much of the inspiration for this project comes from. It sports a sleek CLI, great UI, and cloud-native primitives that make sense. The drawback of Concourse is that it uses a custom way of managing docker containers that can be hard to reason about. This makes testing locally difficult, and running in production means that your short-lived containers exist on a platform that the rest of your company is not used to running containers on. |
| Airflow | ETL systems | I haven't worked with large scale data systems enough to know deeply about how ETL systems came to be, but (maybe naively) they seem to fit into the same paradigm of "run x thing every time y happens". Airflow was particularly rough to operate in the early days of its release, with security and UX around DAG runs/management being nearly non-existent. As an added bonus the scheduler regularly crashed from poorly written user workloads, making it a reliability nightmare. Additionally, Airflow's model of combining the execution logic of your DAGs with your code led to issues of testing and iterating locally. Instead of having tooling specifically for data workloads, it might be easier for both data teams and ops teams to work in the model of distributed cron as Gofer does. Write your stream processing using dedicated tooling/libraries like Benthos (or in whatever language you're most familiar with), wrap it in a Docker container, and use Gofer to manage which containers should run when, where, and how often. This gives you easy testing, separation of responsibilities, and no python decorator spam around your logic. |
| Cadence | ETL systems | I like Uber's Cadence; it does a great job at providing a platform that does distributed cron and has some really nifty features by choosing to interact with your workflows at the code level. The ability to bake in sleeps and polls just like you would in regular code is awesome. But just like Airflow, I don't want to marry my scheduling platform with my business logic. I write the code as I would for a normal application context and I just need something to run that code. When we unmarry the business logic and the scheduling platform we are able to treat it just like we treat all our other code, which means the code workflows (testing, for example) we are all already used to and the ability to foster code reuse for these same processes. To test Uber's Cadence you'll need to bring up a copy of it. To test Gofer you can simply test the code in the container. Gofer doesn't force you to change anything about your code at all. |

Getting Started

Let's start by setting up our first Gofer pipeline!

Installing Gofer

Gofer comes as an easy to distribute pre-compiled binary that you can run on your machine locally, but you can always build Gofer from source if need be.

Pre-compiled (Recommended)

You can download the latest version for Linux here:

wget https://github.com/clintjedwards/gofer/releases/latest/download/gofer

From Source

Gofer contains protobuf assets which will not get compiled if used via go install.

To solve for this we can use make to build ourselves an impromptu version.

git clone https://github.com/clintjedwards/gofer && cd gofer

make build OUTPUT=/tmp/gofer SEMVER=0.0.dev

/tmp/gofer --version

Running the Server Locally

Gofer is deployed as a single static binary allowing you to run the full service locally so you can play with the internals before committing resources to it. Spinning Gofer up locally is also a great way to debug "what would happen if?" questions that might come up during the creation of pipeline config files.

Install Gofer

Install Docker

The way in which Gofer runs containers is called a Scheduler. When deploying Gofer at scale we can deploy it with a more serious container scheduler (Nomad, Kubernetes), but for now we're just going to use the default local docker scheduler included. This simply uses your local instance of docker to run containers.

But before we use your local docker service... you have to have one in the first place. If you don't have docker installed, the installation is quick. Rather than covering the specifics here you can instead find a guide on how to install docker for your operating system on its documentation site.

Start the server

By default the Gofer binary is able to run the server in development mode. Simply start the service by:

gofer service start --dev-mode

🪧 The Gofer CLI has many useful commands; try running gofer -h to see a full listing.

Create Your First Pipeline Configuration

Before you can start running containers you must tell Gofer what you want to run. To do this we create what is called a pipeline configuration.

The creation of this pipeline configuration is very easy and can be done in either Golang or Rust. This allows you to use a fully-featured programming language to organize your pipelines, instead of dealing with YAML mess.

Let's Go!

As an example, let's just copy a pipeline that has been given to us already. We'll use Go as our language, which means you'll need to install it if you don't have it. The Gofer repository gives us a simple pipeline that we can copy and use.

Let's first create a folder where we'll put our pipeline:

mkdir /tmp/simple_pipeline

Then let's copy the Gofer provided pipeline's main file into the correct place:

cd /tmp/simple_pipeline

wget https://raw.githubusercontent.com/clintjedwards/gofer/main/examplePipelines/go/simple/main.go

This should create a main.go file inside our /tmp/simple_pipeline directory.

Lastly, let's initialize the new Golang program:

To complete our Go program we simply have to initialize it with the go mod command.

go mod init test/simple_pipeline

go mod tidy

The pipeline we generated above gives you a very simple pipeline with a few pre-prepared testing docker containers. You should be able to view it using your favorite IDE.

The configuration itself is very simple. Essentially a pipeline consists of a few parts:

> Some basic attributes so we know what to call it and how to document it.

err := sdk.NewPipeline("simple", "Simple Pipeline").

Description("This pipeline shows off a very simple Gofer pipeline that simply pulls in " +

...

> The containers we want to run are defined through tasks.

...

sdk.NewTask("simple_task", "ubuntu:latest").

Description("This task simply prints our hello-world message and exits!").

Command("echo", "Hello from Gofer!").Variable("test", "sample"),

...

> And when we want the pipeline to run automatically, we can do that through extensions.

Register your pipeline

Now we will register your newly created pipeline configuration with Gofer!

More CLI to the rescue

From your terminal, let's use the Gofer binary to run the following command, pointing Gofer at your newly created pipeline folder:

gofer up /tmp/simple_pipeline

Examine created pipeline

It's that easy!

The Gofer command line application uses your local Golang compiler to compile, parse, and upload your pipeline configuration to Gofer.

You should have received a success message and some suggested commands:

✓ Created pipeline: [simple] "Simple Pipeline"

View details of your new pipeline: gofer pipeline get simple

Start a new run: gofer run start simple

We can view the details of our new pipeline by running:

gofer pipeline get simple

If you ever forget your pipeline ID you can list all pipelines that you own by using:

gofer pipeline list

Start a Run

Now that we've set up Gofer, defined our pipeline, and registered it we're ready to actually run our containers.

Press start

gofer run start simple

What happens now?

When you start a run Gofer will attempt to schedule all your tasks according to their dependencies onto your chosen scheduler. In this case that scheduler is your local instance of Docker.
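To give a feel for what "according to their dependencies" can look like, here is a minimal sketch that groups tasks into batches that could be started in parallel. It is an illustration of dependency ordering in general, not a copy of Gofer's scheduler; the waves function and the task names are hypothetical.

```go
package main

import (
	"fmt"
	"sort"
)

// waves groups tasks into batches: every task in a batch has all of its
// dependencies in earlier batches, so a batch can be started in parallel.
// deps maps each task ID to the IDs it depends on; every task must appear
// as a key. An error is returned if no progress can be made (a cycle).
func waves(deps map[string][]string) ([][]string, error) {
	done := map[string]bool{}
	var out [][]string
	for len(done) < len(deps) {
		var batch []string
		for task, needs := range deps {
			if done[task] {
				continue
			}
			ready := true
			for _, n := range needs {
				if !done[n] {
					ready = false
					break
				}
			}
			if ready {
				batch = append(batch, task)
			}
		}
		if len(batch) == 0 {
			return nil, fmt.Errorf("dependency cycle detected")
		}
		sort.Strings(batch) // deterministic output; map iteration order is random
		for _, task := range batch {
			done[task] = true
		}
		out = append(out, batch)
	}
	return out, nil
}

func main() {
	order, err := waves(map[string][]string{
		"lint":   nil,
		"build":  nil,
		"test":   {"build"},
		"deploy": {"lint", "test"},
	})
	fmt.Println(order, err) // prints "[[build lint] [test] [deploy]] <nil>"
}
```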

Your run should be chugging along now!

View a list of runs for your pipeline:

gofer run list simple

View details about your run:

gofer run get simple 1

List the containers that executed during the run:

gofer taskrun list simple 1

View a particular container's details during the run:

gofer taskrun get simple 1 <task_id>

Stream a particular container's logs during the run:

gofer taskrun logs simple 1 <task_id>

What's Next?

Anything!

- Keep playing with Gofer locally and check out all the CLI commands.

- Spruce up your pipeline definition!

- Learn more about Gofer terminology.

- Deploy Gofer for real. Pair it with your favorite scheduler and start using it to automate your jobs.

Pipeline Configuration

A pipeline is a directed acyclic graph of tasks that run together. A single execution of a pipeline is called a run. Gofer allows users to configure their pipeline via a configuration file written in Golang or Rust.

The general hierarchy for a pipeline is:

pipeline

\_ run

\_ task

Each execution of a pipeline is a run and every run consists of one or more tasks (containers). These tasks are where users specify their containers and settings.
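The hierarchy can be modeled with a few plain structs. This is only a mental model: the type and field names below are hypothetical and do not mirror the SDK's real types, and numbering runs from 1 is an assumption based on the CLI examples.

```go
package main

import "fmt"

// Task describes one container to run (hypothetical field names).
type Task struct {
	ID    string
	Image string
}

// Run is a single execution of a pipeline and owns its task runs.
type Run struct {
	Number   int
	TaskRuns []Task
}

// Pipeline is the top of the hierarchy: its tasks are the template, and
// each run instantiates them.
type Pipeline struct {
	ID    string
	Tasks []Task
	Runs  []Run
}

// StartRun appends a new run that includes a copy of every task in the
// pipeline and returns the run's number.
func (p *Pipeline) StartRun() int {
	run := Run{Number: len(p.Runs) + 1, TaskRuns: append([]Task(nil), p.Tasks...)}
	p.Runs = append(p.Runs, run)
	return run.Number
}

func main() {
	p := &Pipeline{ID: "simple", Tasks: []Task{{ID: "simple_task", Image: "ubuntu:latest"}}}
	n := p.StartRun()
	fmt.Printf("pipeline %s, run %d, %d task run(s)\n", p.ID, n, len(p.Runs[0].TaskRuns))
}
```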

SDK

Creating a pipeline involves using Gofer's SDK currently written in Go or Rust.

Extensive documentation can be found on the SDK's reference page. There you will find most of the features and idiosyncrasies available to you when creating a pipeline.

Small Walkthrough

To introduce some of the concepts slowly, let's build a pipeline step by step. We'll be using Go as our pipeline configuration language, and this documentation assumes you've already set up a new Go project and are operating in a main.go file. If you haven't, you can set one up by following the guide instructions.

A Simple Pipeline

Every pipeline starts with a simple pipeline declaration. Here we name our pipeline, giving it a machine-readable ID and a human-readable name.

err := sdk.NewPipeline("simple", "My Simple Pipeline")

Note that while your human-readable name ("My Simple Pipeline" in this case) can contain a wide range of characters, the ID can only contain alphanumeric characters and underscores. Any other character will result in an error when attempting to register the pipeline.
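The ID rule above can be checked locally before registering a pipeline. The following is a minimal sketch, not Gofer's own validation code: the function name `isValidID` and the exact pattern are assumptions for illustration, and the server may enforce additional rules (such as a length limit) that are not modeled here.

```go
package main

import (
	"fmt"
	"regexp"
)

// idPattern accepts one or more ASCII letters, digits, or underscores.
// Assumption: this mirrors the rule described in the text; Gofer's real
// validation may be stricter.
var idPattern = regexp.MustCompile(`^[A-Za-z0-9_]+$`)

// isValidID reports whether id uses only the allowed characters.
func isValidID(id string) bool {
	return idPattern.MatchString(id)
}

func main() {
	candidates := []string{"simple", "my_pipeline_2", "My Simple Pipeline", "bad-id"}
	for _, id := range candidates {
		fmt.Printf("%q valid: %v\n", id, isValidID(id))
	}
}
```

A check like this is only a convenience; the authoritative answer is still the error returned when the pipeline is registered.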

Add a Description

Next we'll add a simple description to remind us what this pipeline is used for.

err := sdk.NewPipeline("simple", "My Simple Pipeline").

Description("This pipeline is purely for testing purposes.")

The SDK uses a builder pattern, so we can chain another function call onto our Pipeline object to set the description.

Add a task

Lastly, let's add a task (container) to our pipeline. We'll add a simple ubuntu container and change the command that runs on container start to print "Hello from Gofer!".

err := sdk.NewPipeline("simple", "My Simple Pipeline").

Description("This pipeline is purely for testing purposes.").

Tasks(sdk.NewTask("simple_task", "ubuntu:latest").

Description("This task simply prints our hello-world message and exits!").

Command("echo", "Hello from Gofer!"),

)

We use the Tasks function to add one or more tasks, and the SDK's NewTask function to create each task. You can see we:

- Give the task an ID, much like our pipeline earlier.

- Specify which image we want to use.

- Tack on a description.

- And then finally specify the command.

To tie a bow on it, we add the .Finish() function to specify that our pipeline is in its final form.

err := sdk.NewPipeline("simple", "My Simple Pipeline").

Description("This pipeline is purely for testing purposes.").

Tasks(sdk.NewTask("simple_task", "ubuntu:latest").

Description("This task simply prints our hello-world message and exits!").

Command("echo", "Hello from Gofer!"),

).Finish()

That's it! This is a fully functioning pipeline.

You can run and test this pipeline much like any other code you write. Running it produces binary protobuf output, which Gofer passes to the server.

Full Example

package main

import (

"log"

sdk "github.com/clintjedwards/gofer/sdk/go/config"

)

func main() {

err := sdk.NewPipeline("simple", "Simple Pipeline").

Description("This pipeline shows off a very simple Gofer pipeline that simply pulls in " +

"a container and runs a command. Veterans of CI/CD tooling should be familiar with this pattern.\n\n" +

"Shown below, tasks are the building blocks of a pipeline. They represent individual containers " +

"and can be configured to depend on one or multiple other tasks.\n\n" +

"In the task here, we simply call the very familiar Ubuntu container and run some commands of our own.\n\n" +

"While this is the simplest example of Gofer, the vision is to move away from writing our logic code " +

"in long bash scripts within these task definitions.\n\n" +

"Ideally, these tasks are custom containers built with the purpose of being run within Gofer for a " +

"particular workflow. Allowing you to keep the logic code closer to the actual object that uses it " +

"and keeping the Gofer pipeline configurations from becoming a mess.\n").

Tasks(

sdk.NewTask("simple_task", "ubuntu:latest").

Description("This task simply prints our hello-world message and exits!").

Command("echo", "Hello from Gofer!").Variable("test", "sample"),

).Finish()

if err != nil {

log.Fatal(err)

}

}

Extra Examples

Auto Inject API Tokens

Gofer has the ability to auto-create and inject a token into your tasks. This is helpful if you want to use the Gofer CLI or the Gofer API to communicate with Gofer at some point in your task.

You can tell Gofer to do this by using the InjectAPIToken function for a particular task.

The token will be cleaned up at the same time the logs for that run are cleaned up.

err := sdk.NewPipeline("my_pipeline", "My Simple Pipeline").

Description("This pipeline is purely for testing purposes.").

Tasks(

sdk.NewTask("simple_task", "ubuntu:latest").

Description("This task simply prints our hello-world message and exits!").

Command("echo", "Hello from Gofer!").InjectAPIToken(true),

).Finish()

Tasks

Gofer's abstraction for running a container is called a Task. Specifically, Tasks are containers you point Gofer to and configure to perform some workload.

A Task can be any Docker container you want to run. In the Getting Started example we take a standard ubuntu:latest container and customize it to run a passed-in bash script.

Tasks(

sdk.NewTask("simple_task", "ubuntu:latest").

Description("This task simply prints our hello-world message and exits!").

Command("echo", "Hello from Gofer!"),

)

Task Environment Variables and Configuration

Gofer handles container configuration the cloud native way. That is to say every configuration is passed in as an environment variable. This allows for many advantages, the greatest of which is standardization.

As a user, you pass your configuration in via the Variable(s) flavor of functions in your pipeline-config.

When a container is run by Gofer, the Gofer scheduler can pass in configuration from multiple sources:

- Your pipeline configuration: Configs you pass in by using the Variable(s) functions.

- Extension/Manual configurations: Extensions are allowed to pass in custom configuration for a run. Usually this configuration gives extra information the run might need (for example, the git commit that activated the extension). Alternatively, if this run was not activated by an extension and was instead kicked off manually, the user who launched the run might opt to pass in configuration at runtime.

- Gofer's system configurations: Gofer will pass in system configurations that might be helpful to the user (for example, which pipeline is currently running).

The exact key names injected for each of these configurations can be seen on any taskrun by getting that taskrun's details: gofer taskrun get <pipeline_name> <run_id> <task_id>

These sources are ordered from most to least important. Since the configuration is passed in a "Key => Value" format any conflicts between sources will default to the source with the greater importance. For instance, a pipeline config with the key GOFER_PIPELINE_ID will replace the key of the same name later injected by the Gofer system itself.
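The precedence rule can be pictured as a merge in which an earlier (more important) source always wins. This is a minimal sketch under that reading, not Gofer's internal code; `mergeVariables` and the `COMMIT` key are hypothetical names used only for illustration.

```go
package main

import "fmt"

// mergeVariables combines sources listed from most to least important.
// A key already set by an earlier source is never overwritten by a later one.
func mergeVariables(sources ...map[string]string) map[string]string {
	merged := make(map[string]string)
	for _, source := range sources {
		for key, value := range source {
			if _, exists := merged[key]; !exists {
				merged[key] = value
			}
		}
	}
	return merged
}

func main() {
	pipelineConfig := map[string]string{"GOFER_PIPELINE_ID": "overridden", "test": "sample"}
	extensionConfig := map[string]string{"COMMIT": "abc123"} // hypothetical extension-provided key
	systemConfig := map[string]string{"GOFER_PIPELINE_ID": "simple", "GOFER_RUN_ID": "1"}

	env := mergeVariables(pipelineConfig, extensionConfig, systemConfig)
	// The pipeline configuration's value replaces the system-injected one.
	fmt.Println("GOFER_PIPELINE_ID =", env["GOFER_PIPELINE_ID"])
	fmt.Println("GOFER_RUN_ID =", env["GOFER_RUN_ID"])
}
```

Because a pipeline-level key silently shadows a system key of the same name, it is usually safer to avoid the GOFER_ prefix for your own variables.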

The current Gofer system injected variables can be found here. Below is a possibly out of date short reference:

| Key | Description |
|---|---|
| GOFER_PIPELINE_ID | The pipeline identification string. |
| GOFER_RUN_ID | The run identification number. |
| GOFER_TASK_ID | The task run identification string. |
| GOFER_TASK_IMAGE | The image name the task is currently running with. |
| GOFER_API_TOKEN | Optional. Runs can be assigned a unique Gofer API token automatically. This makes it easy and manageable for tasks to query Gofer's API and do lots of other convenience tasks. |

What happens when a task is run?

The high level flow is:

- Gofer checks to make sure your task configuration is valid.

- Gofer parses the task configuration's variables list. It attempts to replace any substitution variables with their actual values from the object or secret store.

- Gofer then passes the details of your task to the configured scheduler; variables are passed in as environment variables.

- Usually this means the scheduler will take the configuration and attempt to pull the image mentioned in the configuration.

- Once the image is successfully pulled, the container is run with the settings passed.

Server Configuration

Gofer runs as a single static binary that you deploy onto your favorite VPS.

While Gofer will happily run in development mode without any additional configuration, this mode is NOT recommended for production workloads and not intended to be secure.

Instead, Gofer allows you to edit its startup configuration so that it runs on your favorite container orchestrator, object store, and/or storage backend.

Setup

There are a few steps to setting up the Gofer service for production:

1) Configuration

First you will need to properly configure the Gofer service.

Gofer accepts configuration through environment variables or a configuration file. If a configuration key is set both in an environment variable and in a configuration file, the environment variable's value takes precedence.

You can view a list of environment variables Gofer takes by using the gofer service start -h command. Note that each environment variable starts with the prefix GOFER_. For example, the host configuration can be set as:

export GOFER_SERVER__HOST=localhost:8080

Configuration file

The Gofer service configuration file is written in HCL.

Load order

The Gofer service looks for its configuration in one of several places (ordered by first searched):

- Path given through the GOFER_CONFIG_PATH environment variable

- /etc/gofer/gofer.hcl

🪧 You can generate a sample Gofer configuration file by using the command:

gofer service init-config

Bare minimum production file

These are the bare minimum values you should populate for a production ready Gofer configuration.

The values below should be changed depending on your environment; leaving them as they currently are will lead to loss of data on server restarts.

🪧 To keep your deployment of Gofer safe make sure to use your own TLS certificates instead of the default localhost ones included.

// Gofer Service configuration file is used as an alternative to providing the server configurations via envvars.

// You can find an explanation of these configuration variables and where to put this file so the server can read this

// file in the documentation: https://clintjedwards.com/gofer/ref/server_configuration/index.html

ignore_pipeline_run_events = false

run_parallelism_limit = 200

pipeline_version_limit = 5

event_log_retention = "4380h"

event_prune_interval = "3h"

log_level = "info"

task_run_log_expiry = 50

task_run_logs_dir = "/tmp"

task_run_stop_timeout = "5m"

external_events_api {

enable = true

host = "localhost:8081"

}

object_store {

engine = "sqlite"

sqlite {

path = "/tmp/gofer-object.db"

}

pipeline_object_limit = 50

run_object_expiry = 50

}

secret_store {

engine = "sqlite"

sqlite {

path = "/tmp/gofer-secret.db"

encryption_key = "changemechangemechangemechangeme"

}

}

scheduler {

engine = "docker"

docker {

prune = true

prune_interval = "24h"

}

}

server {

host = "localhost:8080"

shutdown_timeout = "15s"

tls_cert_path = "./localhost.crt"

tls_key_path = "./localhost.key"

storage_path = "/tmp/gofer.db"

storage_results_limit = 200

}

extensions {

install_base_extensions = true

stop_timeout = "5m"

tls_cert_path = "./localhost.crt"

tls_key_path = "./localhost.key"

}

2) Running the binary

You can find the most recent releases of Gofer on the GitHub releases page.

Simply use whatever configuration management system you're most familiar with to place the binary on your chosen VPS and manage it. You can find a quick and dirty wget command to pull the latest version in the getting started documentation.

As an example, a simple systemd service file set up to run Gofer is shown below:

Example systemd service file

[Unit]

Description=gofer service

Requires=network-online.target

After=network-online.target

[Service]

Restart=on-failure

ExecStart=/usr/bin/gofer service start

ExecReload=/bin/kill -HUP $MAINPID

[Install]

WantedBy=multi-user.target

3) First steps

You will notice upon service start that the Gofer CLI is unable to make any requests due to permissions.

You will first need to handle the problem of auth. Every request to Gofer must use an API key so Gofer can appropriately direct requests.

More information about auth in general terms can be found here.

To create your root management token use the command: gofer service token bootstrap

🪧 The token returned is a management token and as such has access to all routes within Gofer. It is advised that you:

- Use this token only in admin situations and to generate other lesser-permissioned tokens.

- Store this token somewhere safe.

From here you can use your root token to provision extra lower permissioned tokens for everyday use.

When communicating with Gofer through the CLI you can set the token to be automatically passed per request in one of many ways.

Configuration Reference

Gofer has a variety of parameters that can be specified via environment variables or the configuration file.

To view a list of all possible environment variables simply type: gofer service start -h.

The most up to date config file values can be found by reading the code or running the command above, but a best effort key and description list is given below.

If examples of these values are needed you can find a sample file by using gofer service init-config.

Values

General

| name | type | default | description |

|---|---|---|---|

| event_log_retention | string (duration) | 4380h | Controls how long Gofer will hold onto events before discarding them. This is an important factor in disk space and memory footprint. Example: Rough math on a 5,000 pipeline Gofer instance with a full 6 months of retention puts the memory and storage footprint at about 9GB. |

| event_prune_interval | string | 3h | How often to check for old events and remove them from the database. |

| ignore_pipeline_run_events | boolean | false | Controls the ability for the Gofer service to execute jobs on startup. If this is set to false you can set it to true manually using the CLI command gofer service toggle-event-ingress. |

| log_level | string | debug | The logging level that is output. It is common to start with info. |

| run_parallelism_limit | int | N/A | The limit automatically imposed if the pipeline does not define a limit. 0 is unlimited. |

| task_run_logs_dir | string | /tmp | The path of the directory to store task run logs. Task run logs are stored as a text file on the server. |

| task_run_log_expiry | int | 20 | The total amount of runs before logs of the oldest run will be deleted. |

| task_run_stop_timeout | string | 5m | The amount of time Gofer will wait for a container to gracefully stop before sending it a SIGKILL. |

| external_events_api | block | N/A | The external events API controls webhook type interactions with extensions. HTTP requests go through the events endpoint and Gofer routes them to the proper extension for handling. |

| object_store | block | N/A | The settings for the Gofer object store. The object store assists Gofer with storing values between tasks since Gofer is by nature distributed. This helps jobs avoid having to download the same objects over and over or simply just allows tasks to share certain values. |

| secret_store | block | N/A | The settings for the Gofer secret store. The secret store allows users to securely populate their pipeline configuration with secrets that are used by their tasks, extension configuration, or scheduler. |

| scheduler | block | N/A | The settings for the container orchestrator that Gofer will use to schedule workloads. |

| server | block | N/A | Controls the settings for the Gofer API service properties. |

| extensions | block | N/A | Controls settings for Gofer's extension system. Extensions are different workflows for running pipelines usually based on some other event (like the passing of time). |

Development (block)

Special feature flags to make development easier

| name | type | default | description |

|---|---|---|---|

| bypass_auth | boolean | false | Skip authentication for all routes. |

| default_encryption | boolean | false | Use default encryption key to avoid prompting for a unique one. |

| pretty_logging | boolean | false | Turn on human readable logging instead of JSON. |

| use_localhost_tls | boolean | false | Use embedded localhost certs instead of prompting the user to provide one. |

Example

development {

bypass_auth = true

}

External Events API (block)

The external events API controls webhook type interactions with extensions. HTTP requests go through the events endpoint and Gofer routes them to the proper extension for handling.

| name | type | default | description |
|---|---|---|---|
| enable | boolean | true | Enable the events API. If this is turned off the events HTTP service will not be started. |
| host | string | localhost:8081 | The address and port to bind the events service to. |

Example

external_events_api {

enable = true

host = "0.0.0.0:8081"

}

Object Store (block)

The settings for the Gofer object store. The object store assists Gofer with storing values between tasks since Gofer is by nature distributed. This helps jobs avoid having to download the same objects over and over or simply just allows tasks to share certain values.

You can find more information on the object store block here.

| name | type | default | description |
|---|---|---|---|
| engine | string | sqlite | The engine Gofer will use to store state. The accepted values here are "sqlite". |
| pipeline_object_limit | int | 50 | The limit to the number of objects that can be stored at the pipeline level. Objects stored at the pipeline level are kept permanently, but once the object limit is reached the oldest object will be deleted. |
| run_object_expiry | int | 50 | Objects stored at the run level are unlimited in number, but only last for a certain number of runs. This number controls how many runs pass before the run objects for the oldest run are deleted. Ex. an object stored on run number #5 with an expiry of 2 will be deleted on run #7 regardless of run health. |
| sqlite | block | N/A | The sqlite storage engine. |

Sqlite (block)

The sqlite store is a built-in, easy to use object store. It is meant for development and small deployments.

| name | type | default | description |
|---|---|---|---|
| path | string | /tmp/gofer-object.db | The path of the file that sqlite will use. If this file does not exist Gofer will create it. |

object_store {

engine = "sqlite"

sqlite {

path = "/tmp/gofer-object.db"

}

}

Secret Store (block)

The settings for the Gofer secret store. The secret store allows users to securely populate their pipeline configuration with secrets that are used by their tasks, extension configuration, or scheduler.

You can find more information on the secret store block here.

| name | type | default | description |

|---|---|---|---|

| engine | string | sqlite | The engine Gofer will use to store state. The accepted values here are "sqlite". |

| sqlite | block | N/A | The sqlite storage engine. |

Sqlite (block)

The sqlite store is a built-in, easy to use secret store. It is meant for development and small deployments.

| name | type | default | description |

|---|---|---|---|

| path | string | /tmp/gofer-secret.db | The path of the file that sqlite will use. If this file does not exist Gofer will create it. |

| encryption_key | string | "changemechangemechangemechangeme" | Key used to encrypt stored secrets to keep them safe. It MUST be 32 characters long and cannot be changed for any reason once it is set, or else all data will be lost. |

secret_store {

engine = "sqlite"

sqlite {

path = "/tmp/gofer-secret.db"

encryption_key = "changemechangemechangemechangeme"

}

}

Scheduler (block)

The settings for the container orchestrator that Gofer will use to schedule workloads.

You can find more information on the scheduler block here.

| name | type | default | description |

|---|---|---|---|

| engine | string | docker | The engine Gofer will use as a container orchestrator. The accepted values here are "docker". |

| docker | block | N/A | Docker is the default container orchestrator and leverages the machine's local docker engine to schedule containers. |

Docker (block)

Docker is the default container orchestrator and leverages the machine's local docker engine to schedule containers.

| name | type | default | description |

|---|---|---|---|

| prune | boolean | false | Controls if the docker scheduler should periodically clean up old containers. |

| prune_interval | string | 24h | Controls how often the prune container job should run. |

scheduler {

engine = "docker"

docker {

prune = true

prune_interval = "24h"

}

}

Server (block)

Controls the settings for the Gofer service's server properties.

| name | type | default | description |

|---|---|---|---|

| host | string | localhost:8080 | The address and port for the service to bind to. |

| shutdown_timeout | string | 15s | The time Gofer will wait for all connections to drain before exiting. |

| tls_cert_path | string | | The TLS certificate Gofer will use for the main service endpoint. This is required. |

| tls_key_path | string | | The TLS certificate key Gofer will use for the main service endpoint. This is required. |

| storage_path | string | /tmp/gofer.db | Where to put Gofer's sqlite database. |

| storage_results_limit | int | 200 | The amount of results Gofer's database is allowed to return on one query. |

server {

host = "localhost:8080"

tls_cert_path = "./localhost.crt"

tls_key_path = "./localhost.key"

tmp_dir = "/tmp"

storage_path = "/tmp/gofer.db"

storage_results_limit = 200

}

Extensions (block)

Controls settings for Gofer's extension system. Extensions are different workflows for running pipelines usually based on some other event (like the passing of time).

You can find more information on the extension block here.

| name | type | default | description |

|---|---|---|---|

| install_base_extensions | boolean | true | Attempts to automatically install the cron and interval extensions on first startup. |

| stop_timeout | string | 5m | The amount of time Gofer will wait until extension containers have stopped before sending a SIGKILL. |

| tls_cert_path | string | | The TLS certificate path Gofer will use for the extensions. This should be a certificate that the main Gofer service will be able to access. |

| tls_key_path | string | | The TLS certificate key path Gofer will use for the extensions. This should be a key that the main Gofer service will be able to access. |

extensions {

install_base_extensions = true

stop_timeout = "5m"

tls_cert_path = "./localhost.crt"

tls_key_path = "./localhost.key"

}

Authentication

Gofer's auth system is meant to be extremely lightweight and a stand-in for a more complex auth system.

How auth works

Gofer uses API Tokens for authorization. You pass a given token in whenever talking to the API, and Gofer evaluates internally what type of token you possess and which namespaces it has access to.

Management Tokens

The first type of token is a management token. Management tokens essentially act as root tokens and have access to all routes.

It is important to be extremely careful about where your management tokens end up and how they are used.

Other than system administration, the main use of management tokens is the creation of new tokens. You can explore token creation through the CLI.

It is advised that you use a single management token as the root token by which you create all user tokens.

Client Tokens

The most common token type is a client token. The client token simply controls which namespaces a user might have access to.

During token creation you can choose one or multiple namespaces for the token to have access to.
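The difference between the two token types can be summarized as an access check. The sketch below models only what is described above (management tokens reach everything, client tokens reach their listed namespaces); the `token` type, its fields, and `canAccess` are hypothetical and are not Gofer's actual data model.

```go
package main

import "fmt"

// tokenKind distinguishes the two token types described above.
type tokenKind int

const (
	managementToken tokenKind = iota
	clientToken
)

// token is a hypothetical in-memory model of a Gofer API token.
type token struct {
	kind       tokenKind
	namespaces []string // only meaningful for client tokens
}

// canAccess reports whether the token may act within the given namespace.
func (t token) canAccess(namespace string) bool {
	if t.kind == managementToken {
		return true // management tokens act as root tokens
	}
	for _, allowed := range t.namespaces {
		if allowed == namespace {
			return true
		}
	}
	return false
}

func main() {
	root := token{kind: managementToken}
	dev := token{kind: clientToken, namespaces: []string{"default", "staging"}}

	fmt.Println("root -> production:", root.canAccess("production"))
	fmt.Println("dev -> staging:", dev.canAccess("staging"))
	fmt.Println("dev -> production:", dev.canAccess("production"))
}
```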

How to auth via the API

The Gofer API uses gRPC's metadata functionality to read tokens from requests:

md := metadata.Pairs("Authorization", "Bearer "+<token>)

How to auth via the CLI

The Gofer CLI accepts many ways of setting a token once you have one.

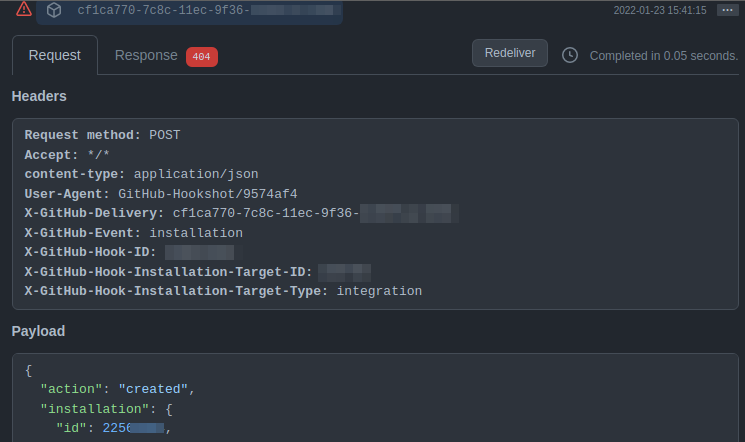

External Events

Gofer has an alternate endpoint specifically for external event streams. This endpoint takes in HTTP requests from the outside and passes them to the relevant extension.

You can find more about external event configuration in the configuration-values reference.

external_events_api {

enable = true

host = "0.0.0.0:8081"

}

It works like this:

- When the Gofer service is started, it starts the external events service on a separate port per the service configuration settings. It is also possible to turn off this feature via the same configuration file.

- External services can send Gofer HTTP requests with payloads and headers specific to the extension they're trying to communicate with. It's possible to target specific extensions by using the /events endpoint.

  ex: https://mygofer.mydomain.com/events/github <- #extension label

- Gofer serializes and forwards the request to the relevant extension, where it is validated for authenticity of sender and then processed.

- An extension may then handle this external event in any way it pleases. For example, the GitHub extension takes in external events which are expected to be GitHub webhooks and starts a pipeline if the event type matches one the user wanted.

The reason for the alternate endpoint is the security concern of sharing an endpoint with the main Gofer API service. Since this endpoint is separate, you can set up security groups such that it is only exposed to IP addresses that you trust, without exposing those same addresses to Gofer as a whole.

Scheduler

Gofer runs the containers you reference in the pipeline configuration via a container orchestrator referred to here as a "scheduler".

The vision of Gofer is for you to use whatever scheduler your team is most familiar with.

Supported Schedulers

The only currently supported scheduler is local docker. This scheduler is used for small deployments and development work.

How to add new Schedulers?

Schedulers are pluggable! Simply implement a new scheduler by following the given interface.

type GetStateResponse struct {

ExitCode int64

State ContainerState

}

type GetLogsRequest struct {

ID string

}

type AttachContainerRequest struct {

ID string

Command []string

}

type AttachContainerResponse struct {

Conn net.Conn

Reader io.Reader

}

type Engine interface {

// StartContainer launches a new container on scheduler.

StartContainer(request StartContainerRequest) (response StartContainerResponse, err error)

// StopContainer attempts to stop a specific container identified by a unique container name. The scheduler

// should attempt to gracefully stop the container, unless the timeout is reached.

StopContainer(request StopContainerRequest) error

// GetState returns the current state of the container translated to the "models.ContainerState" enum.

GetState(request GetStateRequest) (response GetStateResponse, err error)

// GetLogs reads logs from the container and passes them back to the caller via an io.Reader. This io.Reader can

// be written to from a goroutine so that the user gets logs as they are streamed from the container.

// Finally, once finished, the io.Reader should be closed with an EOF denoting that there are no more logs to be read.

GetLogs(request GetLogsRequest) (logs io.Reader, err error)

// Attach to a running container for debugging or other purposes. Returns a net connection, should be closed when finished.

AttachContainer(request AttachContainerRequest) (response AttachContainerResponse, err error)

}

Docker scheduler

The docker scheduler uses the machine's local docker engine to run containers. This is great for small or development workloads and very simple to implement. Simply download docker and go!

scheduler {

engine = "docker"

docker {

prune = true

prune_interval = "24h"

}

}

Configuration

Docker needs to be installed and the Gofer process needs to have the required permissions to run containers upon it.

Other than that the docker scheduler just needs to know how to clean up after itself.

| Parameter | Type | Default | Description |

|---|---|---|---|

| prune | bool | false | Whether or not to periodically clean up containers that are no longer in use. If prune is not turned on eventually the disk of the host machine will fill up with different containers that have run over time. |

| prune_interval | string(duration) | 24h | How often to run the prune job. Depending on how many containers you run per day this value could easily be set to monthly. |

Object Store

Gofer provides an object store as a way to share values and objects between containers. It can also be used as a cache. It is common for one container to run, generate an artifact or values, and then store that object in the object store for the next container or next run. The object store can be accessed through the Gofer CLI or through the normal Gofer API.

Gofer divides the objects stored into two different lifetime groups:

Pipeline-level objects

Gofer can store objects permanently for each pipeline. You can store objects at the pipeline level by using the gofer pipeline store commands:

gofer pipeline store put my-pipeline my_key1=my_value5

gofer pipeline store get my-pipeline my_key1

#output: my_value5

The limitation of pipeline-level objects is that only a fixed number of them can be stored per pipeline. Once that limit is reached, the oldest object in the store is removed to make room for the newest one.
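The eviction behaviour described above resembles a bounded, insertion-ordered map. The following is a minimal sketch of that idea rather than Gofer's storage code; `boundedStore` is a hypothetical type, and the choice to keep an object's age unchanged when its key is updated is an assumption, because the text does not say what happens on update.

```go
package main

import "fmt"

// boundedStore keeps at most limit keys and evicts the oldest key when full.
type boundedStore struct {
	limit  int
	order  []string // keys from oldest to newest
	values map[string]string
}

// newBoundedStore creates a store; limits below 1 are raised to 1.
func newBoundedStore(limit int) *boundedStore {
	if limit < 1 {
		limit = 1
	}
	return &boundedStore{limit: limit, values: make(map[string]string)}
}

// put stores value under key, evicting the oldest key if the store is full.
// Assumption: updating an existing key does not refresh its age.
func (s *boundedStore) put(key, value string) {
	if _, exists := s.values[key]; !exists {
		if len(s.order) >= s.limit {
			oldest := s.order[0]
			s.order = s.order[1:]
			delete(s.values, oldest)
		}
		s.order = append(s.order, key)
	}
	s.values[key] = value
}

// get returns the value stored under key, if any.
func (s *boundedStore) get(key string) (string, bool) {
	value, ok := s.values[key]
	return value, ok
}

func main() {
	store := newBoundedStore(2)
	store.put("my_key1", "my_value1")
	store.put("my_key2", "my_value2")
	store.put("my_key3", "my_value3") // evicts my_key1

	_, stillThere := store.get("my_key1")
	fmt.Println("my_key1 still stored:", stillThere)
}
```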

Run-level objects

Gofer can also store objects on a per-run basis. Unlike pipeline-level objects, run-level objects do not have a limit on how many can be stored, but instead have a limit on how long they last. Typically, after a certain number of runs an object stored at the run level will expire and be deleted.

You can access the run-level store using the run level store CLI commands. Here is an example:

gofer run store put simple_pipeline my_key=my_value

gofer run store get simple_pipeline my_key

#output: my_value
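The configuration reference gives a concrete example of run-level expiry: an object stored on run #5 with an expiry of 2 is deleted on run #7. Read literally, that is a simple comparison of run numbers. The sketch below encodes that reading; `isExpired` is a hypothetical helper, not part of Gofer.

```go
package main

import "fmt"

// isExpired reports whether an object stored during storedRun should be gone
// by the time currentRun executes, given an expiry measured in runs.
func isExpired(storedRun, currentRun, expiry int) bool {
	return currentRun-storedRun >= expiry
}

func main() {
	const storedRun, expiry = 5, 2
	for run := 5; run <= 7; run++ {
		fmt.Printf("run #%d: expired=%v\n", run, isExpired(storedRun, run, expiry))
	}
}
```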

Supported Object Stores

The only currently supported object store is the sqlite object store. Reference the configuration reference for a full list of configuration settings and options.

How to add new Object Stores?

Object stores are pluggable! Simply implement a new object store by following the given interface.

type Engine interface {

GetObject(key string) ([]byte, error)

PutObject(key string, content []byte, force bool) error

ListObjectKeys(prefix string) ([]string, error)

DeleteObject(key string) error

}
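To make the interface concrete, here is a minimal in-memory implementation. It is a sketch for experimentation, not a Gofer backend: `memoryStore` and its error values are hypothetical, and two behaviours are assumptions because the interface alone does not specify them. PutObject refuses to overwrite an existing key unless force is true, and DeleteObject returns an error for a missing key.

```go
package main

import (
	"errors"
	"fmt"
	"sort"
	"strings"
	"sync"
)

// Engine repeats the interface shown above so the example is self-contained.
type Engine interface {
	GetObject(key string) ([]byte, error)
	PutObject(key string, content []byte, force bool) error
	ListObjectKeys(prefix string) ([]string, error)
	DeleteObject(key string) error
}

var (
	errNotFound = errors.New("object not found")
	errExists   = errors.New("object already exists")
)

// memoryStore keeps objects in a map guarded by a mutex.
type memoryStore struct {
	mu      sync.RWMutex
	objects map[string][]byte
}

func newMemoryStore() *memoryStore {
	return &memoryStore{objects: make(map[string][]byte)}
}

// GetObject returns a copy of the stored bytes so callers cannot mutate them.
func (m *memoryStore) GetObject(key string) ([]byte, error) {
	m.mu.RLock()
	defer m.mu.RUnlock()
	content, ok := m.objects[key]
	if !ok {
		return nil, errNotFound
	}
	return append([]byte(nil), content...), nil
}

// PutObject stores content; an existing key is only replaced when force is true (assumption).
func (m *memoryStore) PutObject(key string, content []byte, force bool) error {
	m.mu.Lock()
	defer m.mu.Unlock()
	if _, exists := m.objects[key]; exists && !force {
		return errExists
	}
	m.objects[key] = append([]byte(nil), content...)
	return nil
}

// ListObjectKeys returns the keys that start with prefix, sorted alphabetically.
func (m *memoryStore) ListObjectKeys(prefix string) ([]string, error) {
	m.mu.RLock()
	defer m.mu.RUnlock()
	keys := []string{}
	for key := range m.objects {
		if strings.HasPrefix(key, prefix) {
			keys = append(keys, key)
		}
	}
	sort.Strings(keys)
	return keys, nil
}

// DeleteObject removes key; a missing key is reported as an error (assumption).
func (m *memoryStore) DeleteObject(key string) error {
	m.mu.Lock()
	defer m.mu.Unlock()
	if _, exists := m.objects[key]; !exists {
		return errNotFound
	}
	delete(m.objects, key)
	return nil
}

// Compile-time check that memoryStore satisfies Engine.
var _ Engine = (*memoryStore)(nil)

func main() {
	var store Engine = newMemoryStore()
	_ = store.PutObject("build/artifact", []byte("v1"), false)
	err := store.PutObject("build/artifact", []byte("v2"), false)
	fmt.Println("second put without force:", err)
	keys, _ := store.ListObjectKeys("build/")
	fmt.Println("keys:", keys)
}
```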

Sqlite object store

The sqlite object store is great for development and small deployments.

object_store {

engine = "sqlite"

sqlite {

path = "/tmp/gofer-object.db"

}

}

Configuration

Sqlite needs to create a file on the local machine making the only parameter it accepts a path to the database file.

| Parameter | Type | Default | Description |

|---|---|---|---|

| path | string | /tmp/gofer-object.db | The path on disk to the sqlite db file |

Secret Store

Gofer provides a secret store as a way to enable users to pass secrets into pipeline configuration files.

The secrets included in the pipeline file use a special syntax so that Gofer understands when it is given a secret value instead of a normal variable.

...

env_vars = {

"SOME_SECRET_VAR" = "secret{{my_key_here}}"

}

...

Supported Secret Stores

The only currently supported secret store is the sqlite secret store. Reference the configuration reference for a full list of configuration settings and options.

How to add new Secret Stores?

Secret stores are pluggable! Simply implement a new secret store by following the given interface.

type Engine interface {

GetSecret(key string) (string, error)

PutSecret(key string, content string, force bool) error

ListSecretKeys(prefix string) ([]string, error)

DeleteSecret(key string) error

}

Sqlite secret store

The sqlite secret store is great for development and small deployments.

secret_store {

engine = "sqlite"

sqlite {

path = "/tmp/gofer-secret.db"

encryption_key = "changemechangemechangemechangeme"

}

}

Configuration

Sqlite needs to create a file on the local machine, so it accepts a path to the database file, along with the key used to encrypt secrets.

| Parameter | Type | Default | Description |
|---|---|---|---|
| path | string | /tmp/gofer-secret.db | The path on disk to the sqlite db file |
| encryption_key | string | | 32-character key required to encrypt secrets |
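A 32-character ASCII key is 32 bytes, which is the key size AES-256 expects. This page does not say which cipher the secret store uses, so the following is only a sketch under the assumption of AES-256 in GCM mode, using Go's standard library. The `newGCM`, `encrypt`, and `decrypt` helpers are hypothetical names.

```go
package main

import (
	"crypto/aes"
	"crypto/cipher"
	"crypto/rand"
	"errors"
	"fmt"
)

// newGCM builds an AES-GCM cipher. aes.NewCipher accepts 16, 24, or 32 byte
// keys; this sketch additionally insists on 32 bytes (AES-256).
func newGCM(key string) (cipher.AEAD, error) {
	if len(key) != 32 {
		return nil, errors.New("encryption key must be exactly 32 characters")
	}
	block, err := aes.NewCipher([]byte(key))
	if err != nil {
		return nil, err
	}
	return cipher.NewGCM(block)
}

// encrypt seals plaintext and prepends the random nonce to the result.
func encrypt(key, plaintext string) ([]byte, error) {
	gcm, err := newGCM(key)
	if err != nil {
		return nil, err
	}
	nonce := make([]byte, gcm.NonceSize())
	if _, err := rand.Read(nonce); err != nil {
		return nil, err
	}
	return gcm.Seal(nonce, nonce, []byte(plaintext), nil), nil
}

// decrypt splits off the nonce and opens the remaining ciphertext.
func decrypt(key string, sealed []byte) (string, error) {
	gcm, err := newGCM(key)
	if err != nil {
		return "", err
	}
	if len(sealed) < gcm.NonceSize() {
		return "", errors.New("ciphertext too short")
	}
	nonce, ciphertext := sealed[:gcm.NonceSize()], sealed[gcm.NonceSize():]
	plaintext, err := gcm.Open(nil, nonce, ciphertext, nil)
	if err != nil {
		return "", err
	}
	return string(plaintext), nil
}

func main() {
	// 32 characters, as in the example config above. Do not use in production.
	key := "changemechangemechangemechangeme"
	sealed, err := encrypt(key, "my_secret_value")
	if err != nil {
		panic(err)
	}
	plaintext, err := decrypt(key, sealed)
	if err != nil {
		panic(err)
	}
	fmt.Println(plaintext) // prints "my_secret_value"
}
```

Whatever cipher is actually in use, the practical point is the same: a key of any other length is rejected, and secrets written with one key cannot be read back with another.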

Extensions

Extensions are Gofer's way of adding additional functionality to pipelines. You can subscribe your pipeline to an extension, allowing that extension to give your pipeline extra powers.

The most straightforward example of this is the interval extension. This extension allows your pipeline to run every time some amount of time has passed. Let's say you have a pipeline that needs to run every 5 minutes. You would subscribe your pipeline to the interval extension using the Gofer CLI command gofer extension sub interval every_5_seconds, set to an interval of 5m.

On startup, Gofer launches the interval extension as a long-running container. When your pipeline subscribes to it, the interval extension starts a timer, and when 5 minutes have passed the extension sends an API request to Gofer, causing Gofer to run your pipeline.

Gofer Provided Extensions

You can create your own extensions, but Gofer also provides some ready-made extensions for use.

How do I install an Extension?

Extensions must first be installed by Gofer administrators before they can be used. They can be installed through the CLI. For more information on how to install a specific extension, run:

gofer extension install -h

How do I configure an Extension?

Extensions allow for both system and pipeline configuration, meaning they have both global settings that apply to all pipelines and pipeline-specific settings. This is what makes them so dynamically useful!

Pipeline Configuration

Most extensions allow for some pipeline-specific configuration, usually referred to as "Parameters" or "Pipeline configuration".

These variables are passed when the user subscribes their pipeline to the extension. Each extension defines what these might be in its documentation.

System Configuration

Most extensions have system configurations which allow the administrator or system to inject some needed variables. These are defined when the Extension is installed.

See a specific Extension's documentation for the exact variables accepted and where they belong.

How to add new Extensions / How do I create my own?

Just like tasks, extensions are simply Docker containers, which makes them easily testable and portable. To create a new extension, use the included Gofer SDK.

The SDK provides an interface; you supply a concrete implementation, and the SDK builds a well-functioning gRPC service from it.

// ExtensionServiceInterface provides a light wrapper around the GRPC extension interface. This light wrapper

// provides the caller with a clear interface to implement and allows this package to bake in common

// functionality among all extensions.

type ExtensionServiceInterface interface {

// Init tells the extension it should complete its initialization phase and return when it is ready to serve requests.

// This is useful because sometimes we'll want to start the extension, but not actually have it do anything

// but serve only certain routes like the installation routes.

Init(context.Context, *proto.ExtensionInitRequest) (*proto.ExtensionInitResponse, error)

// Info returns information on the specific extension

Info(context.Context, *proto.ExtensionInfoRequest) (*proto.ExtensionInfoResponse, error)

// Subscribe registers a pipeline with said extension to provide the extension's functionality.

Subscribe(context.Context, *proto.ExtensionSubscribeRequest) (*proto.ExtensionSubscribeResponse, error)

// Unsubscribe allows pipelines to remove their extension subscriptions.

Unsubscribe(context.Context, *proto.ExtensionUnsubscribeRequest) (*proto.ExtensionUnsubscribeResponse, error)

// Shutdown tells the extension to cleanup and gracefully shutdown. If an extension

// does not shutdown in a time defined by the Gofer API the extension will

// instead be Force shutdown(SIGKILL). This is to say that all extensions should

// lean toward quick cleanups and shutdowns.

Shutdown(context.Context, *proto.ExtensionShutdownRequest) (*proto.ExtensionShutdownResponse, error)

// ExternalEvent are json blobs of Gofer's /events endpoint. Normally webhooks.

ExternalEvent(context.Context, *proto.ExtensionExternalEventRequest) (*proto.ExtensionExternalEventResponse, error)

// Run the installer that helps admin user install the extension.

RunExtensionInstaller(stream proto.ExtensionService_RunExtensionInstallerServer) error

// Run the installer that helps pipeline users with their pipeline extension

For a commented example of a simple extension you can follow to build your own, view the interval extension:

// Extension interval simply runs the subscribed pipeline at the given interval.

//

// This package is commented in such a way to make it easy to deduce what is going on, making it

// a perfect example of how to build other extensions.

//

// What is going on below is relatively simple:

// - All extensions are run as long-running containers.

// - We create our extension as just a regular program, paying attention to what we want our variables to be

// when we install the extension and when a pipeline subscribes to this extension.

// - We assimilate the program to become a long running extension by using the Gofer SDK and implementing

// the needed sdk.ExtensionServiceInterface.

// - We simply call NewExtension and let the SDK and Gofer go to work.

package main

import (

"context"

"fmt"

"strings"

"time"

// The proto package provides some data structures that we'll need to return to our interface.

proto "github.com/clintjedwards/gofer/proto/go"

// The sdk package contains a bunch of convenience functions that we use to build our extension.

// It is possible to build an extension without using the SDK, but the SDK makes the process much

// less cumbersome.

sdk "github.com/clintjedwards/gofer/sdk/go/extensions"

// Golang doesn't have a standardized logging interface and as such Gofer extensions can technically

// use any logging package, but because Gofer and provided extensions use zerolog, it is heavily encouraged

// to use zerolog. The log level for extensions is set by Gofer on extension start via Gofer's configuration.

// And logs are interleaved in the stdout for the main program.

"github.com/rs/zerolog/log"

)

// Extensions have two types of variables they can be passed.

// - They take variables called "config" when they are installed.

// - They take variables called "parameters" for each pipeline that subscribes to them.

// This extension has a single parameter called "every".

const (

// "every" is the time between pipeline runs.

// Supports golang native duration strings: https://pkg.go.dev/time#ParseDuration

//

// Examples: "1m", "60s", "3h", "3m30s"

ParameterEvery = "every"

)

// And a single config called "min_interval".

const (

// The minimum interval pipelines can set for the "every" parameter.

ConfigMinInterval = "min_interval"

)

// Extensions are subscribed to by pipelines. Gofer will call the `subscribe` function for the extension and

// pass it details about the pipeline and the parameters it wants.

// This structure is meant to keep details about those subscriptions so that we may

// perform the extension's duties on those pipeline subscriptions.

type subscription struct {

namespace string

pipeline string

pipelineExtensionLabel string

quit context.CancelFunc

}

// SubscriptionID is simply a composite key of the many things that make a single subscription unique.

// We use this as the key in a hash table to lookup subscriptions. Some might wonder why label is part

// of this unique key. That is because extensions should expect that pipelines might

// want to subscribe more than once.

type subscriptionID struct {

namespace string

pipeline string

pipelineExtensionLabel string

}

// Extension is a structure that every Gofer extension should have. It is essentially a God struct that coordinates things

// for the extension as a whole. It contains all information about our extension that we might want to reference.

type extension struct {

// Extensions can be run without "initializing" them. This allows Gofer to run things like the installer without

// having to pass the extension everything it needs to work for normal cases.

// It might be useful to track whether the extension was initialized or not.

isInitialized bool

// The lower limit for how often a pipeline can request to be run.

minInterval time.Duration

// During shutdown the extension will want to stop all intervals immediately. Having the ability to stop all goroutines

// is very useful.

quitAllSubscriptions context.CancelFunc

// The parent context is stored here so that we have a common parent for all goroutines we spin up.

// This enables us to manipulate all goroutines at the same time.

parentContext context.Context

// Mapping of subscription id to actual subscription. The subscription in this case also contains the goroutine

// cancel context for the specified extension. This is important, as when a pipeline unsubscribes from this extension

// we will need a way to stop that specific goroutine from running.

subscriptions map[subscriptionID]*subscription

// Generic extension configuration set by Gofer at startup. Useful for interacting with Gofer.

systemConfig sdk.ExtensionSystemConfig

}

// Init serves to set up the extension for its main functionality. It is needed mostly because we sometimes need

// extensions to launch, but not actually serve all requests(like when we're running the extensions install endpoints).

//

// The Gofer server when launching an extension will call the Init endpoint and then wait until

// a successful response is returned to mark the extension ready to take subscriptions.

func (e *extension) Init(ctx context.Context, request *proto.ExtensionInitRequest) (*proto.ExtensionInitResponse, error) {

minDurationStr := request.Config[ConfigMinInterval]

minDuration := time.Minute * 1

if minDurationStr != "" {

parsedDuration, err := time.ParseDuration(minDurationStr)

if err != nil {

return nil, err

}

minDuration = parsedDuration

}

e.parentContext, e.quitAllSubscriptions = context.WithCancel(context.Background())

e.minInterval = minDuration

e.subscriptions = map[subscriptionID]*subscription{}

config, _ := sdk.GetExtensionSystemConfig()

e.systemConfig = config

e.isInitialized = true

return &proto.ExtensionInitResponse{}, nil

}

// startInterval is the main logic of what enables the interval extension to work. Each pipeline that is subscribed runs